This guide explains how the Discourse AI "Forum Researcher" agent works and how to configure it for in-depth analysis of forum content.

Required user level: Administrator (to enable and configure it); any user (to interact with it, if granted access)

Understanding and using the Forum Researcher agent

The Discourse AI plugin includes an agent called Forum Researcher, a tool designed for in-depth investigation of the content on your forum. With it, you can uncover insights, summarize discussions, and analyze trends across your community.

Overview

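This document covers:

- How the Forum Researcher agent works

- How to configure Forum Researcher

- Best practices for interacting with the agent

- How Forum Researcher differs from the standard forum helper tools

- Guidance on choosing a suitable large language model (LLM)

- Tips for debugging research tasks

- Current limitations of the agent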

How it works

The Forum Researcher agent uses a dedicated `researcher` tool, which is designed to:

- Access forum content: It can read content from across the various sections of your forum.

- Apply advanced filters: A flexible filter system lets it target the relevant information precisely. Content can be selected by:

  - Specific categories (e.g. `category:support` or `categories:support,feedback`)
  - Tags (e.g. `tag:bug` or `tags:bug,regression`)
  - Users or groups (e.g. `username:sam`, `usernames:sam,jane`, `group:moderators`, `groups:moderators,admins`)
  - Keywords in posts or topic titles (e.g. `keywords:regression,bug`, `topic_keywords:feature,request`)
  - Post date range (e.g. `after:2024-01-01 before:2024-06-30`)
  - Topic date range (e.g. `topic_after:2024-01-01 topic_before:2024-06-30`)
  - Specific topic IDs (e.g. `topic:123` or `topics:123,456`)
  - Topic status (e.g. `status:open`, `status:closed`, `status:archived`, `status:noreplies`, `status:single_user`)
  - Post type (e.g. `post_type:first`, `post_type:reply`)
  - Sort order (e.g. `order:latest`, `order:oldest`, `order:latest_topic`, `order:oldest_topic`, `order:likes`)
  - A limit on inline results (e.g. `max_results:50`)
  - Assigned topics, when the Assign plugin is enabled (e.g. `assigned_to:username`, `assigned_to:user1,user2`, `assigned_to:*`, `assigned_to:nobody`)
  - Filters can be combined with AND logic (separated by spaces) or OR logic (using `OR` between filter groups), for example: `category:bugs status:open after:2024-05-01 OR tag:critical usernames:sally`.

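- Analyze content with an LLM: After retrieving the filtered content, it uses an LLM to analyze the information, extract insights, and answer specific questions or accomplish the research goal.

- Follow a structured process: To ensure efficiency and accuracy, and with potential costs in mind, Forum Researcher is designed to:

  - Understand: First work with you to clarify the research goal.
  - Plan: Design a comprehensive research approach based on that goal, using the available filters.
  - Test (dry run): Before running a full analysis, the agent typically performs a "dry run". It counts the posts that match the filter criteria without sending them to the LLM for processing, and then reports the count to you.
  - Refine: If the dry run finds too many posts (risking high costs or overly broad results) or too few (possibly missing important information), the agent helps you adjust the filters.
  - Execute: Once the scope is confirmed as appropriate after the dry run, the agent performs the final analysis and sends the content to the LLM.
  - Summarize: Present the results, typically in Discourse Markdown, with links to the original forum posts and topics as evidence.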

This systematic approach lets you give the researcher tasks such as:

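- "Summarize the most frequently discussed unresolved bugs in the 'mobile-app' category over the last quarter, and identify any proposed solutions or workarounds mentioned in those discussions."

- "Identify the main arguments for and against the 'New User Onboarding' proposal topic (link), and list the key proponents on each side."

- "Review the 'documentation-team' group's activity over the past year, write a report on its main contributions to how-to articles, and highlight the tutorials that received particularly positive feedback."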
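The AND/OR rule for combining filters can be made concrete with a small parser. The Python sketch below splits a filter string into OR-separated groups of space-separated terms. It is a hypothetical illustration of the syntax described in this guide, not the plugin's actual parser. The `Term` class and `parse_filter` function are invented names, and the sketch assumes that filter values never contain spaces and that `OR` is always written in uppercase.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Term:
    key: str           # e.g. "category", "status", "after"
    values: List[str]  # comma-separated values, e.g. ["bug", "regression"]


def parse_filter(query: str) -> List[List[Term]]:
    """Split a filter string into OR-groups of AND-ed terms.

    Hypothetical sketch of the documented syntax, not the plugin's parser.
    """
    groups: List[List[Term]] = [[]]
    for token in query.split():
        if token == "OR":
            groups.append([])  # start a new alternative group
            continue
        # Split on the first colon only, so dates like 2024-05-01 stay intact.
        key, sep, raw = token.partition(":")
        if not sep or not raw:
            raise ValueError(f"malformed filter term: {token!r}")
        groups[-1].append(Term(key, raw.split(",")))
    if any(not group for group in groups):
        raise ValueError("OR must separate two non-empty filter groups")
    return groups


for group in parse_filter(
    "category:bugs status:open after:2024-05-01 OR tag:critical usernames:sally"
):
    print(" AND ".join(f"{t.key}={','.join(t.values)}" for t in group))
```

Applied to the example from this guide, the sketch produces two groups. Under the AND/OR semantics described above, a post would match if it satisfies every term in the first group (`category:bugs`, `status:open`, `after:2024-05-01`) or every term in the second group (`tag:critical`, `usernames:sally`).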

Configuring Forum Researcher

Forum Researcher is disabled by default because it can incur LLM costs.

- Enable the agent: Go to Admin → AI → Agents and activate it.

- Control access: To manage LLM costs, we strongly recommend restricting this agent to specific groups. For finer-grained control, you can also use AI quotas.

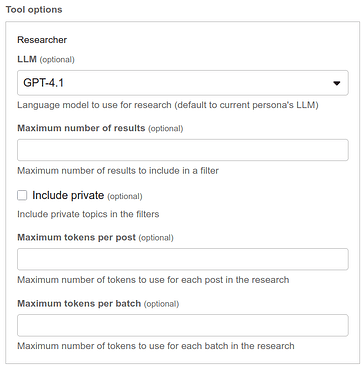

Once the agent is enabled, the tool offers several configuration options:

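- LLM: Choose a specific LLM for research. Defaults to the current agent's LLM. This option lets you balance quality against cost.

- Max results: Limits the number of posts processed per query, to control cost. Defaults to 1000.

- Include private: Allows searching within secured categories, using the permissions of the user interacting with the agent.

- Max tokens per post: Truncates long posts to save token costs. Defaults to 2000 tokens; the minimum is 50.

- Max tokens per batch: Controls the size of the data chunks sent to the LLM. This is useful for LLMs with large context windows, or when you want to keep the analysis focused. If it is set to 8000 or less, it defaults to the LLM's maximum prompt tokens minus a 2000-token buffer.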

Best practices for interacting with the agent

To get the most out of Forum Researcher while keeping costs under control, keep the following in mind:

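- Be specific about your goal: Before you start, define clearly what you want to find out. The agent works best when it has a clear objective.

- Check the scope after the dry run: The agent usually starts with a "dry run" and reports how many posts it found for your request. Pay attention to this number. If it is too high (risking high costs or unfocused results) or too low (possibly missing important information), work with the agent to adjust the filters before running the full analysis.

- Refine filters iteratively: If the first dry run does not target the right information, work with the agent to adjust the filter criteria. Add more specific keywords, narrow the date range, or specify categories or tags.

- Consolidate queries: The agent is designed to handle several related goals in a single research run. Try grouping related questions into one comprehensive research request.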
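The dry-run review described above comes down to a simple decision: narrow the filters, broaden them, or run the analysis. The Python sketch below models that decision under stated assumptions:

- `review_scope`, `pick_filter`, the `dry_run` callback and the sample counts are hypothetical.
- The upper threshold defaults to 1000 only because that is the default "Max results" value.
- The lower threshold of 20 is an arbitrary placeholder.

In practice, the agent and the user make this judgment in conversation rather than by applying fixed numbers.

```python
from typing import Callable, Iterable, Optional


def review_scope(count: int, low: int = 20, high: int = 1000) -> str:
    """Classify a dry-run post count (thresholds are illustrative assumptions)."""
    if count > high:
        return "narrow"   # too many posts: higher cost, less focused results
    if count < low:
        return "broaden"  # too few posts: important information may be missed
    return "run"          # scope looks reasonable: proceed to the full analysis


def pick_filter(
    candidates: Iterable[str],
    dry_run: Callable[[str], int],
    low: int = 20,
    high: int = 1000,
) -> Optional[str]:
    """Return the first candidate filter whose dry-run count is in range.

    `candidates` stands in for the successive refinements agreed with the
    agent; `dry_run` stands in for counting matching posts without sending
    anything to the LLM.
    """
    for query in candidates:
        if review_scope(dry_run(query), low, high) == "run":
            return query
    return None  # no candidate was acceptable; keep refining


# Hypothetical dry-run counts for successive refinements of one request.
counts = {
    "category:support": 5400,
    "category:support after:2024-01-01": 640,
    "category:support after:2024-01-01 tag:login": 35,
}
print(pick_filter(counts, lambda q: counts[q]))
```

Here the first, broad filter is rejected as too large. The date-restricted version is accepted before the narrowest one is even tried, which mirrors the advice to stop refining once the count looks reasonable.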

How it relates to the standard forum helper and related tools

The Forum Researcher agent differs from the general forum helper, which relies on standard tools such as Search and Read.

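- The standard Search and Read tools:

  - The Search tool mainly identifies relevant topics by matching keywords against post content and other criteria (tags, categories, and so on). For each matching topic, it returns a link and a short snippet from the relevant post, not the full post content.
  - The Read tool accesses the full content of a specific topic identified by Search (or of selected posts within it).
  - These tools work together for targeted retrieval: Search finds topics, and Read digests their content.

- Forum Researcher's `researcher` tool:

  - Direct, in-depth content analysis: The `researcher` tool does more than identify topics. It directly processes and analyzes the full content of many posts (up to the configured max results) that match comprehensive filter criteria.
  - Advanced filtering and synthesis: It uses a richer filter language to build a dataset of posts from across the entire forum, potentially spanning hundreds of topics. It then synthesizes information from that whole dataset to answer complex questions. This is fundamentally different from reading topics one at a time.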

In short, a forum helper uses Search to identify topics (showing snippets) and Read to dig into one of them. Forum Researcher instead runs a broad analysis over the actual text of many posts at once, surfacing deeper, synthesized insights.

Which LLM should you use?

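LLM technology evolves rapidly, and model capability and cost efficiency keep improving. During Forum Researcher's development, models such as Gemini 2.5 Flash, Gemini 2.5 Pro, GPT-4.1, and Claude 4 Sonnet delivered excellent results on complex research plans.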

The best choice depends on your specific needs:

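- High-quality, nuanced analysis: More capable models may be preferable, although they usually cost more.

- Broad overviews or cost-sensitive tasks: Faster, more economical models can be very effective.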

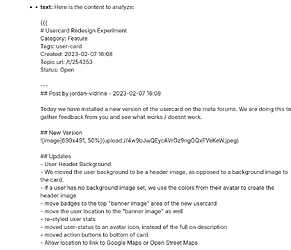

Below is a point-in-time test example of a very specific, complex query run internally at Discourse:

Review all of the top 1000 open topics in the feature category (ordered by likes on the first post only) and produce an executive report covering:

- The top 20 features CDCK should build

- The top 20 features that would be easiest for CDCK to build

- Obvious duplicates

- Items that are very poorly defined

No questions needed; just run the research.

Hybrid example: Gemini 2.5 Pro as the driver, Gemini 2.0 Flash as the researcher LLM


Debugging research

Discourse lets you enable advanced AI debugging by adding groups to the `ai_bot_debugging_allowed_groups` site setting. This lets you inspect the actual payloads sent to the LLM.

Limitations

There is currently no option to send images to the research LLM. This is being considered for a future version.

Frequently asked questions (FAQ)

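- Is Forum Researcher available on all Discourse plans?

  Forum Researcher is part of the Discourse AI plugin and is available on self-hosted sites and on Enterprise hosting plans.

- Can Forum Researcher access content in secured categories?

  Yes, provided that the "Include private" option is enabled in the settings and the user interacting with the agent has the permissions required to access those categories.

- How can I control the cost of using Forum Researcher?

  - Restrict access to specific, trusted groups.
  - Limit processing with the "Max results" and "Max tokens per post" settings.
  - Choose a cost-effective LLM.
  - Review the dry-run estimate carefully before running the full research.
  - Use AI quotas.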
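"Max results" and "Max tokens per post" together bound how much post content a single research run can send. With the defaults, that bound is 1000 × 2000 = 2,000,000 tokens. The Python sketch below computes this bound and the number of batches it implies if every post hits the per-post limit. It rests on these assumptions:

- Prompt overhead and output tokens are ignored.
- The default batch budget of 126,000 tokens assumes a hypothetical LLM with a 128,000-token prompt limit, minus the 2000-token buffer.
- `price_per_million_tokens` is a placeholder to replace with your provider's actual rate.

```python
import math


def worst_case_input_tokens(max_results: int = 1000, max_tokens_per_post: int = 2000) -> int:
    """Upper bound on post tokens sent in one run (ignores prompt overhead)."""
    return max_results * max_tokens_per_post


def worst_case_batches(max_results=1000, max_tokens_per_post=2000, max_tokens_per_batch=126_000):
    """Batches needed if every post is truncated at the per-post limit."""
    posts_per_batch = max(1, max_tokens_per_batch // max_tokens_per_post)
    return math.ceil(max_results / posts_per_batch)


def worst_case_input_cost(price_per_million_tokens: float, **settings) -> float:
    """Input-token cost bound; the price is a placeholder, not a real rate."""
    return worst_case_input_tokens(**settings) / 1_000_000 * price_per_million_tokens


print(worst_case_input_tokens())   # 2000000
print(worst_case_batches())        # 16 (63 posts fit in each batch)
print(worst_case_input_cost(1.0))  # 2.0
```

Halving either "Max results" or "Max tokens per post" halves the token bound, which makes these two settings the most direct levers in the cost checklist above.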