This guide covers the Forum Researcher agent in Discourse AI: how it works and how to configure it for in-depth analysis of forum content.

Required user level: Administrator (to enable and configure), all users (to interact, if granted access)

Understanding and using the Forum Researcher agent

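The Discourse AI plugin includes the Forum Researcher agent, a powerful tool for in-depth research into the content of your forum. It can help you uncover insights, summarize discussions, and analyze community trends.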

Summary

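This document covers:

- How the Forum Researcher agent works.

- Steps to configure the Forum Researcher.

- Best practices for interacting with the agent.

- How the Forum Researcher differs from the standard forum helper.

- Guidance on choosing a suitable large language model (LLM).

- Tips for debugging research tasks.

- Current limitations of the agent.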

How it works

The Forum Researcher agent uses a dedicated Researcher tool. This tool is designed to:

- Access forum content: It can browse the various sections of your forum.

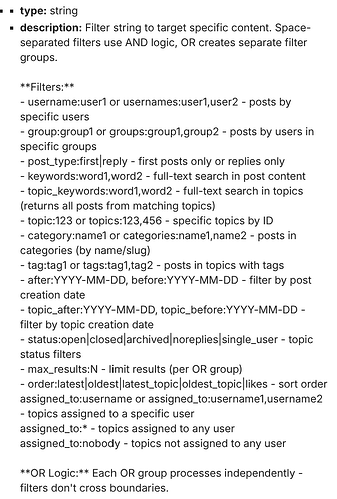

- Apply advanced filters: A flexible filtering system lets the tool pinpoint relevant information. You can specify content by:

  - Specific categories (e.g. `category:support` or `categories:support,feedback`)

  - Tags (e.g. `tag:bug` or `tags:bug,regression`)

  - Users or groups (e.g. `username:sam`, `usernames:sam,jane`, `group:moderators`, `groups:moderators,admins`)

  - Keywords in posts or topic titles (e.g. `keywords:regression,bug`, `topic_keywords:feature,request`)

  - Date ranges for posts (e.g. `after:2024-01-01 before:2024-06-30`)

  - Date ranges for topics (e.g. `topic_after:2024-01-01 topic_before:2024-06-30`)

  - Specific topic IDs (e.g. `topic:123` or `topics:123,456`)

  - Topic status (e.g. `status:open`, `status:closed`, `status:archived`, `status:noreplies`, `status:single_user`)

  - Post type (e.g. `post_type:first`, `post_type:reply`)

  - Sort order (e.g. `order:latest`, `order:oldest`, `order:latest_topic`, `order:oldest_topic`, `order:likes`)

  - Inline result limit (e.g. `max_results:50`)

  - Assigned topics (if the Assign plugin is enabled, e.g. `assigned_to:username`, `assigned_to:user1,user2`, `assigned_to:*`, `assigned_to:nobody`)

  - Filters can be combined with AND logic (space-separated) or OR logic (`OR` between filter groups). For example: `category:bugs status:open after:2024-05-01 OR tag:critical usernames:sally`.

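- Analyze content with an LLM: After retrieving the filtered content, it uses an LLM to analyze the information, extract insights, and answer your specific questions or meet your research goals.

- Follow a structured process: For efficiency and accuracy, and because of the potential costs involved, the Forum Researcher is designed to:

  - Understand: It starts by working with you to clarify your research goals.

  - Plan: Based on your goals, it designs a comprehensive research approach using the available filters.

  - Test (dry run): Before running the full analysis, the agent usually performs a "dry run". This counts how many posts match your filters without processing them with the LLM yet. The agent then tells you that number.

  - Refine: Based on the dry run results, the agent can help you adjust the filters if the post count is too high (which could mean high costs or overly broad results) or too low (which could miss key information).

  - Execute: Once you confirm that the scope is right (after the dry run), the agent runs the final analysis and sends the content to the LLM.

  - Summarize: It presents its findings, usually formatted in Discourse Markdown, with links to the original forum posts and topics as supporting evidence.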

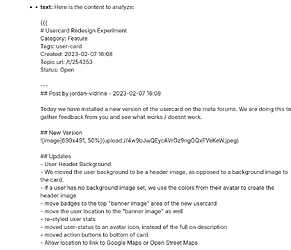
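The test, refine, and execute steps amount to placing the expensive LLM step behind a cheap count. The Python sketch below is a hypothetical model of that loop, not the agent's code. `count_posts` stands in for the dry run, `candidates` stands in for successively refined filters, and `confirm` stands in for your approval. The thresholds are illustrative values, not plugin settings.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Optional


@dataclass
class DryRun:
    filter_query: str
    post_count: int


def choose_scope(
    candidates: Iterable[str],
    count_posts: Callable[[str], int],
    min_posts: int,
    max_posts: int,
    confirm: Callable[[DryRun], bool],
) -> Optional[DryRun]:
    """Return the first candidate filter whose dry-run count is acceptable.

    Nothing is sent to an LLM here: count_posts only counts matching posts.
    """
    for query in candidates:
        count = count_posts(query)  # the dry run
        if count > max_posts:
            continue  # too broad: costly or unfocused, try a narrower filter
        if count < min_posts:
            continue  # too narrow: risks missing key information
        run = DryRun(query, count)
        if confirm(run):  # the user signs off before the paid LLM step
            return run
    return None


if __name__ == "__main__":
    # Made-up counts standing in for real dry runs of refined filters.
    fake_counts = {
        "category:support": 5400,
        "category:support after:2024-01-01": 640,
        "category:support after:2024-01-01 tag:bug": 35,
    }
    chosen = choose_scope(
        fake_counts,
        count_posts=fake_counts.__getitem__,
        min_posts=50,
        max_posts=1000,
        confirm=lambda run: True,
    )
    print(chosen)
```

In practice, the refinement happens in conversation with the agent rather than from a precomputed list. The point of the sketch is only that the LLM is invoked after a count has been reviewed.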

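This systematic approach means you can ask the Researcher to perform tasks such as:

- "Summarize the most frequently discussed unresolved bugs in the 'mobile-app' category over the last quarter, and identify any suggested solutions or workarounds mentioned in the discussions."

- "Help me find the main arguments for and against the 'new user onboarding' proposal topic (link), and list the key proponents of each side."

- "Review the activity of the 'documentation-team' group over the past year and provide a report on their main contributions to how-to articles, highlighting any tutorials that received significant positive feedback."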
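The filter combination rules described under "Apply advanced filters" can be illustrated with a small parser. The following Python sketch is hypothetical: it is not the Researcher tool's implementation, and the name `parse_filter` and its output shape are assumptions. It shows how a query splits into OR-separated groups, with the space-separated terms inside each group AND-ed together and comma lists expanded into multiple values.

```python
from typing import Dict, List

# Hypothetical representation: each OR-separated group maps a filter key to
# its values; the terms inside one group are meant to be combined with AND.
FilterGroup = Dict[str, List[str]]


def parse_filter(query: str) -> List[FilterGroup]:
    groups: List[FilterGroup] = []
    current: FilterGroup = {}
    for token in query.split():
        if token == "OR":
            # Only uppercase OR is treated as an operator in this sketch;
            # it closes the current AND-group and starts a new one.
            if current:
                groups.append(current)
            current = {}
            continue
        key, sep, value = token.partition(":")
        if not sep or not value:
            raise ValueError(f"not a key:value filter: {token!r}")
        # Comma-separated values (e.g. tags:bug,regression) become a list.
        current.setdefault(key, []).extend(v for v in value.split(",") if v)
    if current:
        groups.append(current)
    return groups


if __name__ == "__main__":
    query = "category:bugs status:open after:2024-05-01 OR tag:critical usernames:sally"
    for i, group in enumerate(parse_filter(query), start=1):
        print(i, group)
```

For the example query from the filter list, this yields two groups: one with `category`, `status`, and `after`, and one with `tag` and `usernames`.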

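Configuring the Forum Researcher

The Forum Researcher is disabled by default because using it can incur LLM costs.

- Enable the agent: Go to Admin → AI → Agents to activate it.

- Control access: Restricting this agent to specific groups is strongly recommended to keep LLM costs under control. For finer-grained control, you can also use AI quotas.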

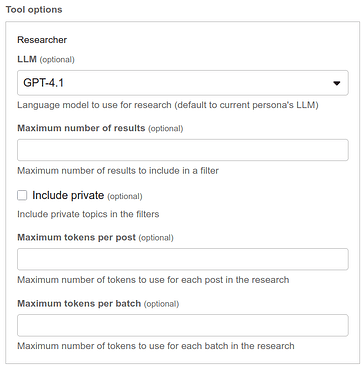

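Once enabled, the tool has several configuration options:

- LLM: The specific LLM used for research. Defaults to the current agent's LLM. This option lets you balance quality against cost.

- Max results: Limits the number of posts processed per query to control cost. Defaults to 1000.

- Include private content: Allows searching secure categories, using the permissions of the interacting user.

- Max tokens per post: Truncates long posts to save on token costs. Defaults to 2000 tokens; the minimum is 50.

- Max tokens per batch: Controls the size of the data chunks sent to the LLM. This is useful for LLMs with large context windows, or for keeping the analysis focused. If set to 8000 or lower, it defaults to the LLM's max prompt tokens minus a 2000-token buffer.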
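To see how Max results, Max tokens per post, and Max tokens per batch interact, here is a minimal Python sketch. It is not the plugin's code. The class and function names are hypothetical, and tokens are approximated as whitespace-separated words, whereas real LLM tokenizers count differently. The defaults, the minimum of 50, and the batch-size fallback rule follow the option descriptions above. Treating the minimum as a clamp is an assumption.

```python
from dataclasses import dataclass
from typing import List

MIN_TOKENS_PER_POST = 50         # documented minimum
BATCH_FALLBACK_THRESHOLD = 8000  # batch values at or below this trigger the fallback
BATCH_BUFFER = 2000              # tokens reserved from the LLM's prompt limit


@dataclass
class ResearcherSettings:
    # Hypothetical field names; defaults mirror the option descriptions above.
    max_results: int = 1000
    max_tokens_per_post: int = 2000
    max_tokens_per_batch: int = 0  # 0 (or anything <= 8000) means "derive from the LLM"

    def post_limit(self) -> int:
        # Assumption: values below the minimum are clamped up to it.
        return max(self.max_tokens_per_post, MIN_TOKENS_PER_POST)

    def batch_limit(self, llm_max_prompt_tokens: int) -> int:
        if self.max_tokens_per_batch <= BATCH_FALLBACK_THRESHOLD:
            return llm_max_prompt_tokens - BATCH_BUFFER
        return self.max_tokens_per_batch


def count_tokens(text: str) -> int:
    # Crude stand-in for a real tokenizer: one token per whitespace-separated word.
    return len(text.split())


def truncate(text: str, limit: int) -> str:
    return " ".join(text.split()[:limit])


def build_batches(
    posts: List[str], settings: ResearcherSettings, llm_max_prompt_tokens: int
) -> List[List[str]]:
    per_post = settings.post_limit()
    per_batch = settings.batch_limit(llm_max_prompt_tokens)
    batches: List[List[str]] = []
    current: List[str] = []
    used = 0
    for post in posts[: settings.max_results]:  # Max results caps the dataset
        clipped = truncate(post, per_post)      # Max tokens per post trims long posts
        size = count_tokens(clipped)
        if current and used + size > per_batch:  # this chunk is full, start another
            batches.append(current)
            current, used = [], 0
        current.append(clipped)
        used += size
    if current:
        batches.append(current)
    return batches
```

Each inner list corresponds to one chunk of content that would go to the LLM. In this sketch, a single post that alone exceeds the batch limit still gets its own batch rather than being dropped.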

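Best practices for interaction

To get the most out of the Forum Researcher while keeping costs in check:

- Be clear about your goals: Before you start, define exactly what you want to learn. The agent works best when it has a precise objective.

- Confirm the scope after the dry run: The agent usually performs a "dry run" first and tells you how many posts match your request. Pay close attention to this number. If it is too high (possibly leading to high costs or unfocused results) or too low (possibly missing key information), discuss refining your filters with the agent before running the full analysis.

- Iterate on filters: If the initial dry run does not target the right information, work with the agent to adjust the filter criteria: add more specific keywords, narrow the date range, or specify categories or tags.

- Consolidate queries: The agent is designed to handle multiple related goals in a single research run. Try grouping related questions into one comprehensive research request.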
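Before confirming a dry run, it can help to turn the reported post count into a rough upper bound on input tokens. The helper below is hypothetical. It assumes every post is truncated to exactly Max tokens per post (a worst case) and ignores prompt overhead and output tokens. It takes the batch size and any per-token price as inputs you supply, so no real pricing is assumed.

```python
from typing import Optional


def worst_case_input(
    post_count: int,
    batch_limit: int,
    max_results: int = 1000,
    max_tokens_per_post: int = 2000,
    usd_per_million_tokens: Optional[float] = None,
) -> dict:
    posts = min(post_count, max_results)  # Max results caps what gets processed
    tokens = posts * max_tokens_per_post  # worst case: every post hits the per-post cap
    # Lower bound on batch count (perfect packing); real batching may need more.
    batches = (tokens + batch_limit - 1) // batch_limit
    estimate = {"posts": posts, "max_input_tokens": tokens, "batches": batches}
    if usd_per_million_tokens is not None:
        estimate["max_input_cost"] = tokens / 1_000_000 * usd_per_million_tokens
    return estimate


if __name__ == "__main__":
    # 640 posts from a dry run, with a hypothetical 126,000-token batch size.
    print(worst_case_input(640, batch_limit=126_000))
    # → {'posts': 640, 'max_input_tokens': 1280000, 'batches': 11}
```

Plug in your own model's price per million input tokens to turn the token bound into a cost bound.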

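Relationship to the standard forum helper and related tools

The Forum Researcher agent differs from the general-purpose forum helper, which relies on standard tools such as Search and Read.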

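- Standard `Search` and `Read` tools:

  - The `Search` tool is mainly used to identify relevant topics. It matches keywords against post content and other criteria (tags, categories, etc.). For each matching topic, it returns a link and a short snippet from the relevant post, not the full post content.

  - The `Read` tool accesses the full content of specific topics (or selected posts within them) that `Search` has identified.

  - These tools work together for targeted retrieval: `Search` finds topics, and `Read` digests their content.

- The Forum Researcher's `researcher` tool:

  - Direct, in-depth content analysis: The `researcher` tool does more than identify topics. It directly processes and analyzes the full content of potentially many posts (up to the configured max results) that match its comprehensive filter criteria.

  - Advanced filtering and synthesis: It uses a more sophisticated filter language to build a dataset of posts from across the whole forum (potentially spanning hundreds of topics). It then synthesizes information across that entire dataset to answer complex questions. This is fundamentally different from reading individual topics one at a time.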

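In short, the forum helper uses Search to pinpoint topics (showing snippets) and Read to dig into one of them. The Forum Researcher, by contrast, analyzes the actual text of many posts at once to uncover deeper, synthesized insights.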

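Which LLM should I use?

LLM technology is evolving rapidly, and models keep improving in both capability and cost-effectiveness. During development of the Forum Researcher, models such as Gemini 2.5 Flash, Gemini 2.5 Pro, GPT-4.1, and Claude 4 Sonnet delivered excellent results on complex research plans.

The best choice depends on your specific needs:

- High-quality, nuanced analysis: More advanced models may be a better fit, although they usually cost more.

- Broad overviews or cost-sensitive tasks: Faster, cheaper models can be very effective.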

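Here is an example prompt from Discourse's internal testing for a very specific, complex query:

Look at the top 1000 open topics in the feature category sorted by likes (first post only)... and produce an executive report for me covering:

- The top 20 features CDCK should build

- The 20 features that would be easiest for CDCK to build

- Obvious duplicates

- Items that are very vaguely defined

Don't ask me any more questions, just run the research

Hybrid example: Gemini 2.5 Pro as the driving model, with Gemini 2.0 Flash as the researcher LLM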


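Debugging research

In Discourse, you can enable advanced AI debugging by adding a group to the `ai_bot_debugging_allowed_groups` site setting. Once this is set, you will be able to see the actual payload sent to the LLM.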
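If you prefer to script this step instead of using the site settings page, the Discourse Admin API accepts site setting updates via `PUT /admin/site_settings/{setting}`, authenticated with `Api-Key` and `Api-Username` headers. The sketch below only builds the request and does not send it. The forum URL, API key, and group IDs are placeholders. Storing the group list as pipe-separated group IDs is an assumption that you should verify against your Discourse version.

```python
from typing import Iterable
from urllib.parse import urlencode
from urllib.request import Request

SETTING = "ai_bot_debugging_allowed_groups"


def build_setting_update(
    base_url: str, api_key: str, api_username: str, group_ids: Iterable[int]
) -> Request:
    # Assumption: group_list site settings store group IDs joined by "|".
    value = "|".join(str(g) for g in group_ids)
    body = urlencode({SETTING: value}).encode()  # form field named after the setting
    return Request(
        f"{base_url.rstrip('/')}/admin/site_settings/{SETTING}",
        data=body,
        headers={
            "Api-Key": api_key,
            "Api-Username": api_username,
            "Content-Type": "application/x-www-form-urlencoded",
        },
        method="PUT",
    )


if __name__ == "__main__":
    req = build_setting_update("https://forum.example.com", "YOUR_API_KEY", "system", [3, 41])
    print(req.get_method(), req.full_url)
    # To actually apply it (requires an admin API key):
    # from urllib.request import urlopen; urlopen(req)
```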

Limitations

There is currently no option to send images to the research LLM. This will be considered for a future release.

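FAQs

- Is the Forum Researcher available on all Discourse plans?

  The Forum Researcher is part of the Discourse AI plugin, which is available for self-hosted sites and on our Enterprise hosting plan.

- Can the Forum Researcher access content in secure categories?

  Yes, if the "Include private content" option is enabled in its configuration and the user interacting with the agent has the necessary permissions to access those categories.

- How can I control the cost of using the Forum Researcher?

  - Restrict access to specific, trusted groups.

  - Use the "Max results" and "Max tokens per post" settings to limit how much content is processed.

  - Choose a cost-effective LLM.

  - Pay close attention to the "dry run" estimate before running the full research.

  - Use AI quotas.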