I did a rebuild this morning and then tried to restore a backup in the container. I'm on version 2.6.0.beta5 (75a893fd61), with everything inside the container.

Restoring the backup would normally work (it has worked before), but today it failed as follows:

Starting restore: app-2020-11-06-033740-v20201009190955.tar.gz

[STARTED]

'system' has started the restore!

Marking restore as running...

Making sure /var/www/discourse/tmp/restores/default/2020-11-06-084354 exists...

Copying archive to tmp directory...

Unzipping archive, this may take a while...

Extracting dump file...

Validating metadata...

Current version: 20201103103401

Restored version: 20201009190955

Enabling readonly mode...

Pausing sidekiq...

Waiting up to 60 seconds for Sidekiq to finish running jobs...

Waiting for sidekiq to finish running jobs... #2

Waiting for sidekiq to finish running jobs... #3

Waiting for sidekiq to finish running jobs... #4

Waiting for sidekiq to finish running jobs... #5

Waiting for sidekiq to finish running jobs... #6

Waiting for sidekiq to finish running jobs... #7

Waiting for sidekiq to finish running jobs... #8

Waiting for sidekiq to finish running jobs... #9

Waiting for sidekiq to finish running jobs... #10

EXCEPTION: Sidekiq did not finish running all the jobs in the allowed time!

/var/www/discourse/lib/backup_restore/system_interface.rb:89:in `block in wait_for_sidekiq'

/var/www/discourse/lib/backup_restore/system_interface.rb:84:in `loop'

/var/www/discourse/lib/backup_restore/system_interface.rb:84:in `wait_for_sidekiq'

/var/www/discourse/lib/backup_restore/restorer.rb:47:in `run'

script/discourse:143:in `restore'

/var/www/discourse/vendor/bundle/ruby/2.6.0/gems/thor-1.0.1/lib/thor/command.rb:27:in `run'

/var/www/discourse/vendor/bundle/ruby/2.6.0/gems/thor-1.0.1/lib/thor/invocation.rb:127:in `invoke_command'

/var/www/discourse/vendor/bundle/ruby/2.6.0/gems/thor-1.0.1/lib/thor.rb:392:in `dispatch'

/var/www/discourse/vendor/bundle/ruby/2.6.0/gems/thor-1.0.1/lib/thor/base.rb:485:in `start'

script/discourse:284:in `<top (required)>'

/usr/local/lib/ruby/gems/2.6.0/gems/bundler-2.1.4/lib/bundler/cli/exec.rb:63:in `load'

/usr/local/lib/ruby/gems/2.6.0/gems/bundler-2.1.4/lib/bundler/cli/exec.rb:63:in `kernel_load'

/usr/local/lib/ruby/gems/2.6.0/gems/bundler-2.1.4/lib/bundler/cli/exec.rb:28:in `run'

/usr/local/lib/ruby/gems/2.6.0/gems/bundler-2.1.4/lib/bundler/cli.rb:476:in `exec'

/usr/local/lib/ruby/gems/2.6.0/gems/bundler-2.1.4/lib/bundler/vendor/thor/lib/thor/command.rb:27:in `run'

/usr/local/lib/ruby/gems/2.6.0/gems/bundler-2.1.4/lib/bundler/vendor/thor/lib/thor/invocation.rb:127:in `invoke_command'

/usr/local/lib/ruby/gems/2.6.0/gems/bundler-2.1.4/lib/bundler/vendor/thor/lib/thor.rb:399:in `dispatch'

/usr/local/lib/ruby/gems/2.6.0/gems/bundler-2.1.4/lib/bundler/cli.rb:30:in `dispatch'

/usr/local/lib/ruby/gems/2.6.0/gems/bundler-2.1.4/lib/bundler/vendor/thor/lib/thor/base.rb:476:in `start'

/usr/local/lib/ruby/gems/2.6.0/gems/bundler-2.1.4/lib/bundler/cli.rb:24:in `start'

/usr/local/lib/ruby/gems/2.6.0/gems/bundler-2.1.4/exe/bundle:46:in `block in <top (required)>'

/usr/local/lib/ruby/gems/2.6.0/gems/bundler-2.1.4/lib/bundler/friendly_errors.rb:123:in `with_friendly_errors'

/usr/local/lib/ruby/gems/2.6.0/gems/bundler-2.1.4/exe/bundle:34:in `<top (required)>'

/usr/local/bin/bundle:23:in `load'

/usr/local/bin/bundle:23:in `<main>'

Trying to rollback...

There was no need to rollback

Cleaning stuff up...

Removing tmp '/var/www/discourse/tmp/restores/default/2020-11-06-084354' directory...

Unpausing sidekiq...

Disabling readonly mode...

Marking restore as finished...

Notifying 'system' of the end of the restore...

Finished!

[FAILED]

Restore done.
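If I read the log correctly, the restore checks Sidekiq ten times within roughly 60 seconds and aborts if jobs are still running. Below is a minimal sketch of that kind of bounded wait, to make clear what I think is timing out. It is not the Discourse source: `wait_until_idle`, `WaitTimeout`, the `busy` callable and the 6-second interval are all names and values I made up for illustration.

```ruby
# Hypothetical sketch of a bounded polling loop; NOT the Discourse implementation.
class WaitTimeout < StandardError; end

# Polls `busy` (any callable that returns true while jobs are still running)
# up to `attempts` times, sleeping `interval` seconds between checks.
# Returns the number of checks performed, or raises WaitTimeout if the
# callable still reports busy on the last check.
# `sleeper` is injectable so the loop can be exercised without real delays.
def wait_until_idle(busy, attempts: 10, interval: 6, sleeper: ->(s) { sleep(s) })
  1.upto(attempts) do |iteration|
    return iteration unless busy.call
    # No point sleeping after the final failed check.
    sleeper.call(interval) if iteration < attempts
  end
  raise WaitTimeout, "jobs still running after #{attempts} checks"
end
```

With a Sidekiq process stuck at "5 of 5 busy", a loop like this can never see an idle state, which would match the exception above.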

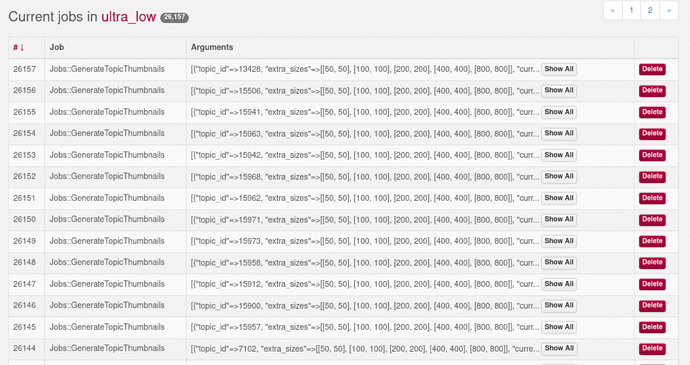

After that, I've had Ruby processes using 100% CPU for hours. The process shows up like this:

# ps aux | grep sidekiq

discour+ 141 100 5.0 9302596 401484 ? SNl 06:34 127:46 sidekiq 6.1.2 discourse [5 of 5 busy]

If I stop and restart the container, this Sidekiq goes back to 100%. sidekiq.log is empty and production.log doesn't show me much.

How can I find out what this Sidekiq is doing? Has anyone else run into problems restoring backups on this version?