You can set a group that is allowed to run the query. The option is in each query's settings.

Yes, but once the group is authorized, how does a user in that group actually run the query? They can't see plugins. Where do they find the query?

Send them the link to the query.

Have you tried it as a regular user?

This plugin is now bundled with Discourse core as part of Bundling more popular plugins with Discourse core. If you are self-hosting and use the plugin, you need to remove it from your app.yml before your next upgrade.
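For a standard Docker-based install, the change might look like the sketch below. It assumes the default `containers/app.yml` layout with plugins cloned under `hooks.after_code`, and your own plugin list will differ. The only edit is to delete (or comment out) the Data Explorer clone line.

```yaml
## containers/app.yml (excerpt; assumes the default layout)
hooks:
  after_code:
    - exec:
        cd: $home/plugins
        cmd:
          - git clone https://github.com/discourse/docker_manager.git
          # Remove this line: Data Explorer now ships with Discourse core
          # - git clone https://github.com/discourse/discourse-data-explorer.git
```

On a standard install, the change takes effect at the next rebuild (`./launcher rebuild app`).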

This wasn't obvious to me at all. It took me a while to figure out. Maybe there's a way to make it clearer.

Question: Is it true that only admins can access the Data Explorer? I can't find this stated explicitly anywhere, and I can't find a setting for it either. Is it simply hard-coded?

Only admins can create queries, because access to the entire database is a serious security concern (email addresses, IP addresses, and so on).

You can make queries available to members of a particular group, which it sounds like you've already figured out.

Thanks. Moderators can already see individual email and IP addresses, so I wasn't sure what the reason for the additional restriction was. We trust our moderators as much as our admins.

Maybe those weren't good examples. They could, for instance, send a login link to a user, then retrieve the key from the database and log in as that user (something admins can do, but I don't think moderators can). They would also have access to all setting values and could obtain secrets (for example AI API keys, authentication keys, etc.).

If you trust them as much as admins, then you can simply make them all admins.

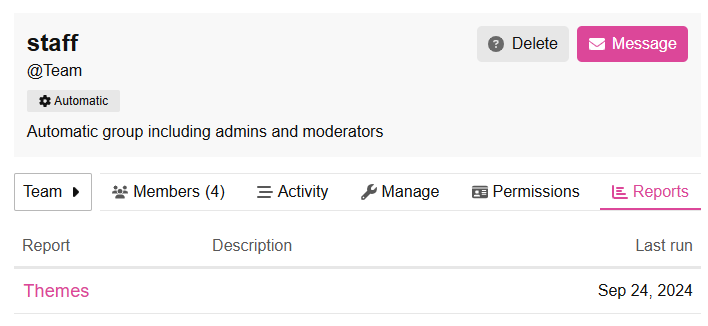

Small UI tweak:

If you had just clicked the pencil to edit the report title and were presented with this, does it really make sense to click the X button to save the change? Doesn't X usually mean cancel? I don't use DE often, but whenever I do and need to edit the title, I end up clicking the < icon, thinking it will save. It doesn't. It takes you out of the report entirely.

Maybe I'm just being dense, but wouldn't it make much more sense if this were similar (maybe identical?) to the post title editing interface?

I started a topic about this a while ago

Oh, yes. That is EXACTLY what I'm talking about. I'm sorry to see it got no reaction. It seems like an obvious problem.