Continuing the discussion from Limit posts per topic / user / month:

Hi, and thank you for your interest!

For more context: in 2021, my first project, a forum in Kazakhstan, did not succeed because most residents prefer to use Telegram or WhatsApp. The arrival of the Discourse AI and Chatbot plugins gave my website a second chance, but it is now focused exclusively on communicating with AI (categorized query templates, bot personas, etc.).

1) Regarding control of token spending: it would be good to have a separate statistics/settings panel for monitoring and managing token spending per bot persona (GPT3, 3.5, 4, 4.5t and/or Composer Assistant) that a user interacts with. As an administrator, over one month of trial use I have already burned more than $70 in tokens on ChatGPT queries, which is a significant expense for me. Now that I want to give regular users access to the bots, I am starting to worry about my budget, which is hard to control.
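As a rough illustration of what such a statistics panel would need to compute, here is a minimal Python sketch that totals the estimated spending per bot persona from a log of calls. The `UsageRecord` type, the persona names, the assumption that each persona maps to exactly one model, and the per-1K-token prices are all hypothetical placeholders, not Discourse AI internals or current provider prices.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical per-1K-token prices in USD. Real prices depend on the provider
# and change over time; these numbers are placeholders only.
# Assumes each persona is backed by exactly one model priced as below.
PRICE_PER_1K_TOKENS = {
    "gpt-3.5": 0.002,
    "gpt-4": 0.06,
}


@dataclass
class UsageRecord:
    """One LLM call: which user, which bot persona, how many tokens it used."""
    user: str
    persona: str
    tokens: int


def spending_by_persona(records: list[UsageRecord]) -> dict[str, float]:
    """Sum the estimated cost (USD) of all calls, grouped by persona."""
    totals: dict[str, float] = defaultdict(float)
    for record in records:
        price = PRICE_PER_1K_TOKENS[record.persona]
        totals[record.persona] += record.tokens / 1000 * price
    return dict(totals)
```

Grouping the same records by `user` instead of `persona` would give the per-user view that a panel for regular users would show.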

Imagine that I grant AI access to a specific group of users and tell them: "Use it." Suppose one user sends enough queries in a single day to exhaust my budget. Then another user tries to query the AI and... gets no response at all. The second user may not understand why the bot did not reply, assume the service is broken, and switch to other services.

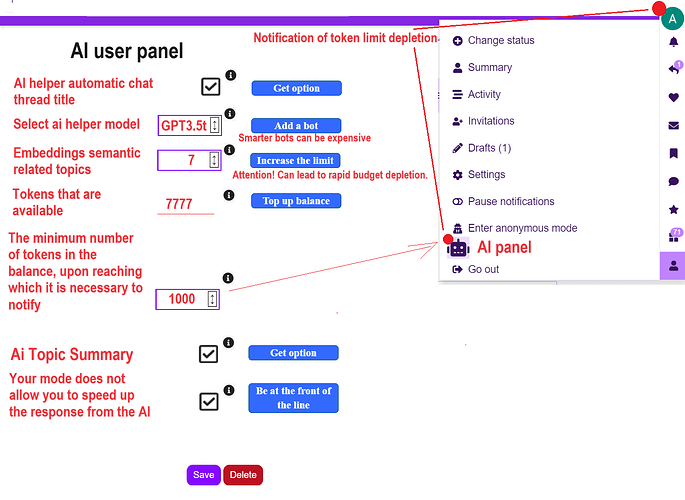

For this reason, it would make sense to have a separate panel in the user profile where every user (administrator, moderator or regular user) can monitor and control their token spending, the bot's temperature, top_p (although this could instead go in the post editor, see the diagram below) and other generation settings.

For example, I would like to set a spending threshold for myself and, when it is reached, receive a notification to top up my budget/tokens. Since different AI models can differ in the cost of the tokens they consume, I would like to be able to limit tokens per bot, both for myself and for other user groups. Each user should be able to manage their allocated token limit at their own discretion, much as an administrator can. It would also be useful to give certain user groups (moderators, TL4) the ability to fine-tune generation settings (temperature, top_p, etc.).
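A minimal Python sketch of such a per-user budget, assuming a token limit per bot and an alert threshold expressed as a fraction of that limit. `TokenBudget`, `try_spend` and the 80% default are hypothetical names and values chosen for illustration, not existing Discourse AI settings. It also addresses the silent-failure scenario above: a refused request returns an explanation instead of no answer.

```python
from dataclasses import dataclass, field


@dataclass
class TokenBudget:
    """Hypothetical per-user budget: a token limit for each bot, plus an
    alert threshold (fraction of the limit) that triggers one notification."""
    limits: dict[str, int]              # bot name -> max tokens per period
    alert_ratio: float = 0.8            # notify once 80% of a limit is used
    used: dict[str, int] = field(default_factory=dict)
    notified: set[str] = field(default_factory=set)  # bots already alerted

    def try_spend(self, bot: str, tokens: int) -> tuple[bool, list[str]]:
        """Record a request if it fits in the budget.

        Returns (allowed, messages). A refused request comes with a message
        the user can see, so the bot never just stays silent."""
        messages: list[str] = []
        limit = self.limits.get(bot, 0)  # bots without a limit are blocked
        spent = self.used.get(bot, 0)
        if spent + tokens > limit:
            messages.append(
                f"Token limit for {bot} reached ({spent}/{limit}); please top up."
            )
            return False, messages
        self.used[bot] = spent + tokens
        # Alert only once per bot when the threshold is first crossed.
        if bot not in self.notified and self.used[bot] >= self.alert_ratio * limit:
            self.notified.add(bot)
            messages.append(f"You have used {self.used[bot]} of {limit} tokens for {bot}.")
        return True, messages
```

Resetting `used` and `notified` at the start of each billing period (for example monthly) is left out of the sketch.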

Similarly, instead of defining a single maximum number of semantically related topics (found via embeddings) for all users, it would be practical to set this limit per user group. The Staff group could get a maximum of 7, regular users 3, and so on. Each user should be able to adjust these values in the user panel of their own account. This approach would democratize the use of AI and the ability to control the token limits allocated to each user.
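A minimal Python sketch of how a per-group cap and a per-user preference could combine, assuming the most generous limit among a user's groups applies and a user may only choose a value at or below it. The group names `staff` and `regular_users` and the fallback of 3 are hypothetical.

```python
from typing import Optional

# Hypothetical per-group caps on how many semantically related topics
# (found via embeddings) are shown; the group names are placeholders.
GROUP_MAX_RELATED = {"staff": 7, "regular_users": 3}
DEFAULT_MAX_RELATED = 3  # used when none of the user's groups has a cap


def max_related_topics(groups: list[str], user_preference: Optional[int] = None) -> int:
    """Return the effective number of related topics for one user.

    The cap is the most generous limit among the user's groups. A user may
    pick a smaller number in their own preferences but never exceed the cap.
    """
    cap = max(
        (GROUP_MAX_RELATED[g] for g in groups if g in GROUP_MAX_RELATED),
        default=DEFAULT_MAX_RELATED,
    )
    if user_preference is None:
        return cap
    return max(0, min(user_preference, cap))
```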

Likewise, ai helper automatic chat thread title could be determined per user group, giving each user the option to enable or disable this feature in the user panel. ai helper model could also be left to each user's choice within what their group allows: if I give Group-A the choice between GPT4t and GPT3.5t, each member could pick one independently.
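A minimal Python sketch of per-group model choice, assuming each group grants a list of models, a user may use any model granted by any of their groups, and an unavailable preference falls back to the first allowed model. The group names and the `GROUP_ALLOWED_MODELS` mapping are hypothetical; this is not how the `ai helper model` setting currently works.

```python
from typing import Optional

# Hypothetical mapping from user group to the models its members may pick.
GROUP_ALLOWED_MODELS = {
    "group_a": ["gpt-4-turbo", "gpt-3.5-turbo"],
    "everyone": ["gpt-3.5-turbo"],
}


def allowed_models(groups: list[str]) -> list[str]:
    """Union of the models allowed by all of the user's groups, order preserved."""
    models: list[str] = []
    for group in groups:
        for model in GROUP_ALLOWED_MODELS.get(group, []):
            if model not in models:
                models.append(model)
    return models


def resolve_model(groups: list[str], preferred: Optional[str] = None) -> str:
    """Use the user's preferred model if their groups allow it,
    otherwise fall back to the first allowed model."""
    options = allowed_models(groups)
    if not options:
        raise ValueError("none of the user's groups may use an AI model")
    if preferred in options:
        return preferred
    return options[0]
```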

It would also be possible to let privileged groups have their queries prioritized, that is, sent to the LLM at the front of the queue.
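Prioritizing privileged groups amounts to a priority queue. Here is a minimal Python sketch using the standard `heapq` module, assuming a fixed numeric priority per group (lower is served first); the group names and priority values are hypothetical. A counter breaks ties so that requests with the same priority keep their arrival order.

```python
import heapq
import itertools

# Hypothetical priorities per group: a lower number is served first.
GROUP_PRIORITY = {"staff": 0, "premium": 1}
DEFAULT_PRIORITY = 2  # everyone else


class LLMRequestQueue:
    """Priority queue of pending LLM requests.

    Requests from privileged groups jump ahead; within the same priority,
    requests keep their arrival order (FIFO) thanks to a running counter."""

    def __init__(self) -> None:
        self._heap: list[tuple[int, int, str]] = []
        self._counter = itertools.count()

    def push(self, user_groups: list[str], prompt: str) -> None:
        # The user's best (lowest) priority among their groups applies.
        priority = min(
            (GROUP_PRIORITY[g] for g in user_groups if g in GROUP_PRIORITY),
            default=DEFAULT_PRIORITY,
        )
        heapq.heappush(self._heap, (priority, next(self._counter), prompt))

    def pop(self) -> str:
        """Remove and return the next prompt to send to the LLM."""
        _priority, _order, prompt = heapq.heappop(self._heap)
        return prompt

    def __len__(self) -> int:
        return len(self._heap)
```

One caveat of a strict priority queue: under constant load from privileged groups, regular users' requests could wait indefinitely, so a real system would probably need some form of aging or a reserved share of capacity.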

I have tried to illustrate this in more detail (I made the illustration quickly, so please don't judge it too harshly):

Note: in the image above, I tried to show the features that could be offered to regular users. These features could be locked, and to make that clear to the user, there should be buttons to unlock features, raise limits or add a bot. These buttons are highlighted in blue; clicking any of them would take the user to a page inviting them to join a privileged group for more ways to interact with the AI.

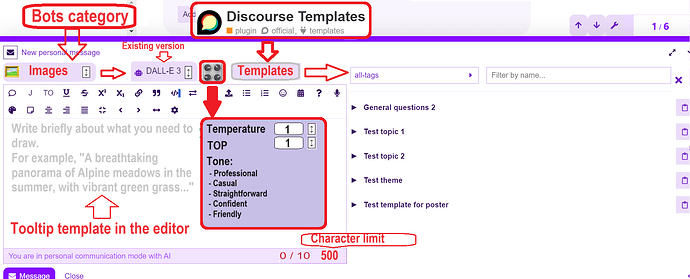

2) In the editor, I suggest:

-

Categorizzare i bot per tipi (Lavorare con immagini, testo, audio, ecc.) e impostazioni aggiuntive per le query (vedi punto 1 sopra) all’interno dell’interfaccia Composer.

-

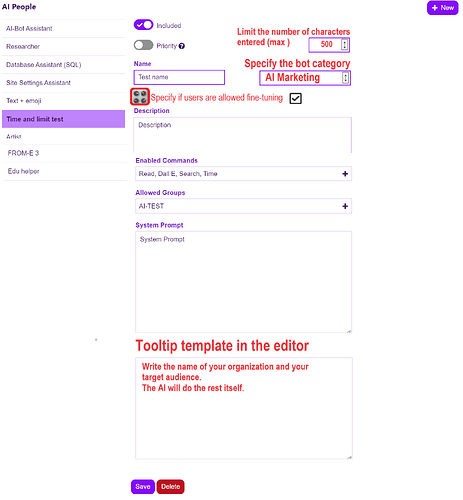

Aggiungere la possibilità di limitare il numero di caratteri per una query in base al personaggio del bot (come una delle leve per ridurre il carico del server) o al gruppo di utenti. Ho discusso qualcosa di correlato qui.

-

La possibilità di inserire un modello di query utilizzando il plugin esistente Discourse Templates o possibilmente una futura modifica (da utilizzare nei messaggi personali) attualmente in fase di sviluppo: Modelli di Modulo Sperimentali.

-

La possibilità di inserire un modello di suggerimento nell’area di immissione del testo (simile ai modelli di tema delle categorie nelle impostazioni delle categorie).
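For the character-limit suggestion in the list above, here is a minimal Python sketch, assuming a limit can be set per persona and per group, that the most generous group limit applies, and that the stricter of the persona and group limits wins. All names and numbers are hypothetical. The returned counter string is the kind of text the editor could display under the input area.

```python
from typing import Optional

# Hypothetical limits; persona and group names are placeholders.
PERSONA_CHAR_LIMIT = {"gpt-4": 1000, "gpt-3.5": 4000}
GROUP_CHAR_LIMIT = {"staff": 8000, "regular_users": 2000}


def char_limit(persona: str, groups: list[str]) -> Optional[int]:
    """Effective limit: the stricter of the persona limit and the most
    generous limit among the user's groups. None means no limit applies."""
    candidates: list[int] = []
    if persona in PERSONA_CHAR_LIMIT:
        candidates.append(PERSONA_CHAR_LIMIT[persona])
    group_limits = [GROUP_CHAR_LIMIT[g] for g in groups if g in GROUP_CHAR_LIMIT]
    if group_limits:
        candidates.append(max(group_limits))
    return min(candidates) if candidates else None


def check_query(text: str, persona: str, groups: list[str]) -> tuple[bool, str]:
    """Return (allowed, counter), e.g. (False, "1500/1000 characters")."""
    limit = char_limit(persona, groups)
    if limit is None:
        return True, f"{len(text)} characters"
    return len(text) <= limit, f"{len(text)}/{limit} characters"
```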

Here is an example illustration:

Note: it would be useful to show the character limit at the bottom of the editor, as shown in the image.

Additional options (beyond the API settings) in the AI Persona Editor for Discourse, which would then be shown in the message editor:

PS. I have not been feeling well these days (I'm ill), so some of my suggestions may be a bit scattered and not entirely clear. I am new to Discourse, have no programming knowledge, and find it hard to follow this English-language forum, where posts often use specialized terms. So I recognize that some of my ideas may be a little absurd or at odds with Discourse's technical constraints. I also understand that the team may have its own roadmap for the plugin, which will not necessarily match my views. Nevertheless, I decided to write this post because I believe the AI revolution will draw many users to services like these, and Discourse already has all the technology needed to interact with AI, ahead of most emerging projects on the market (the fact that OpenAI uses Discourse for its own forum says a lot). Better to speak up than to stay silent. In that spirit, please take my proposal as an outside perspective: a suggestion from an ordinary user, used to social networks and messengers, who wants the clarity and interaction features that social networks and messengers often lack.

Edit: I understand that implementing such features may require considerable labor and money (which not every sponsor can afford). It might be worth putting these proposals to a vote and/or organizing crowdfunding.